Recently stumbled upon this article about using OpenAI’s API in an iOS Shortcut, to act as a smart HomeKit Voice Assistant.

Grabbed the Shortcut from the article, and modified it to create two shortcuts that speak OpenAI’s API response:

- a “textual” shortcut, that prompts you for text input

- a “voice” shortcut, that uses Speech-to-Text to convert your voice input to text

The nice part about the textual version is that it can also be used from the iOS Share Sheet, on text you selected in another app.

Additionally, this can be improved further to simulate a ChatGPT-like experience, by keeping conversational context.

Shortcuts linked at the end of the post.

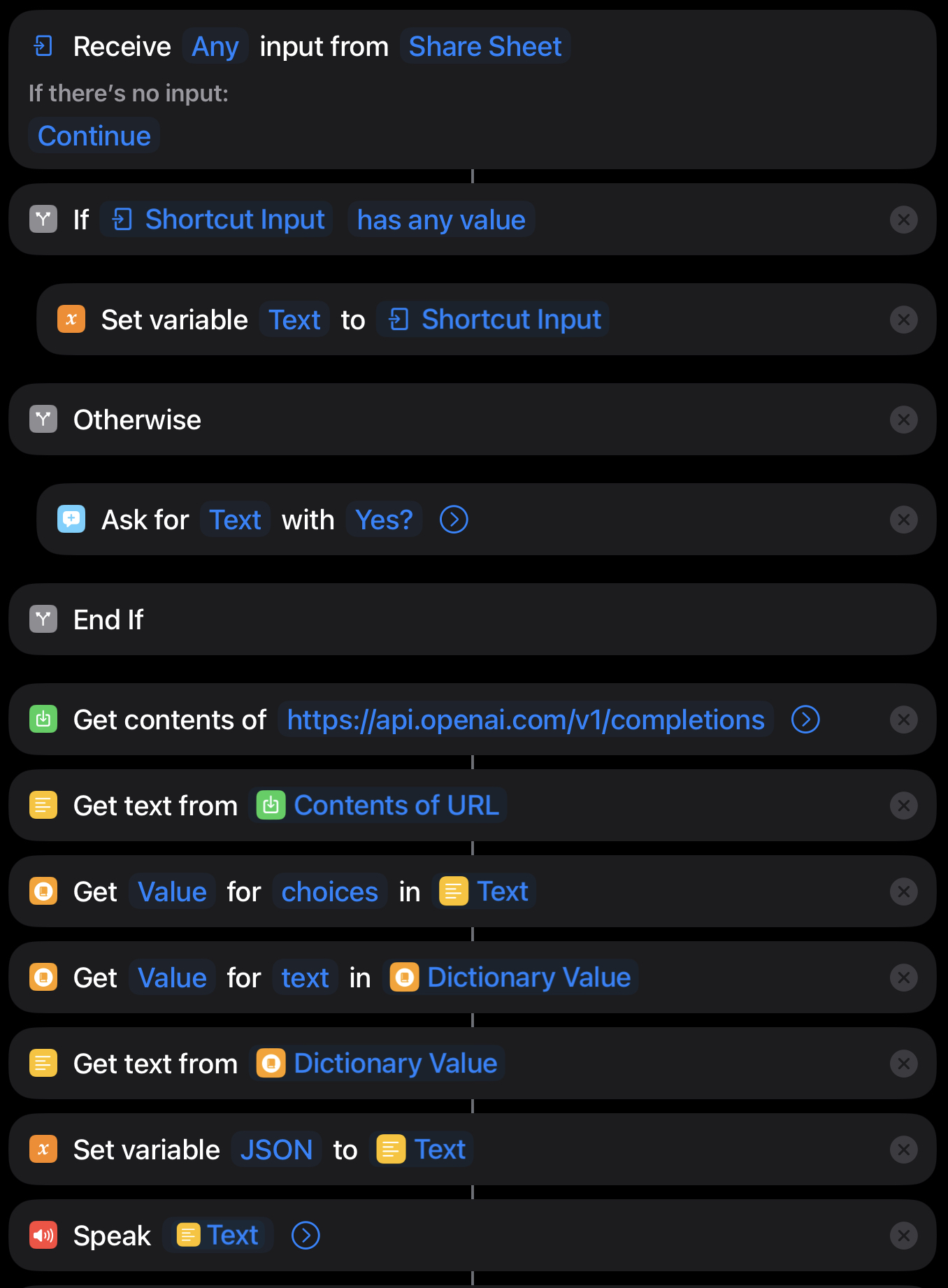

Textual Shortcut

The shortcut is pretty straightforward: it prompts you for a text input (or reads the Shortcut Input value if called via the Share Sheet), and then calls the OpenAI API with the text you entered.
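For readers who want to see what that API call amounts to outside of Shortcuts, here is a rough Python sketch of the request. It assumes the completions endpoint (`/v1/completions`) and the `text-davinci-003` model that appear in the sample response in this post. The function name `build_completion_request`, the `max_tokens` value and the API key placeholder are made up for illustration and are not taken from the shortcut itself.

```python
import json
import urllib.request

# Completions endpoint; the sample response in this post has
# "object": "text_completion", which is what this endpoint returns.
API_URL = "https://api.openai.com/v1/completions"


def build_completion_request(prompt, api_key,
                             model="text-davinci-003", max_tokens=100):
    """Build (but do not send) a POST request carrying the prompt.

    max_tokens=100 is an arbitrary value chosen for this sketch.
    """
    body = json.dumps({
        "model": model,
        "prompt": prompt,
        "max_tokens": max_tokens,
    }).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    # "YOUR_API_KEY" is a placeholder; use your own key.
    request = build_completion_request("How are you?", "YOUR_API_KEY")
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
    # To actually send it: urllib.request.urlopen(request)
```

The request is only built here, not sent, so the sketch can be run without a key or network access.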

The shortcut then extracts the text value from the choices array. The API’s response for the prompt “How are you?” has the following form:

    {
      "id": "cmpl-6bSFgj3DGgqMri31EJjE3xOy4fiko",
      "object": "text_completion",
      "created": 1674384756,
      "model": "text-davinci-003",
      "choices": [{
        "text": "\n\nI'm doing great, thanks for asking!",
        "index": 0,
        "logprobs": null,
        "finish_reason": "stop"
      }],
      "usage": {
        "total_tokens": 19,
        "completion_tokens": 11,
        "prompt_tokens": 8
      }
    }

In summary, the textual version of the iOS Shortcut looks like this:

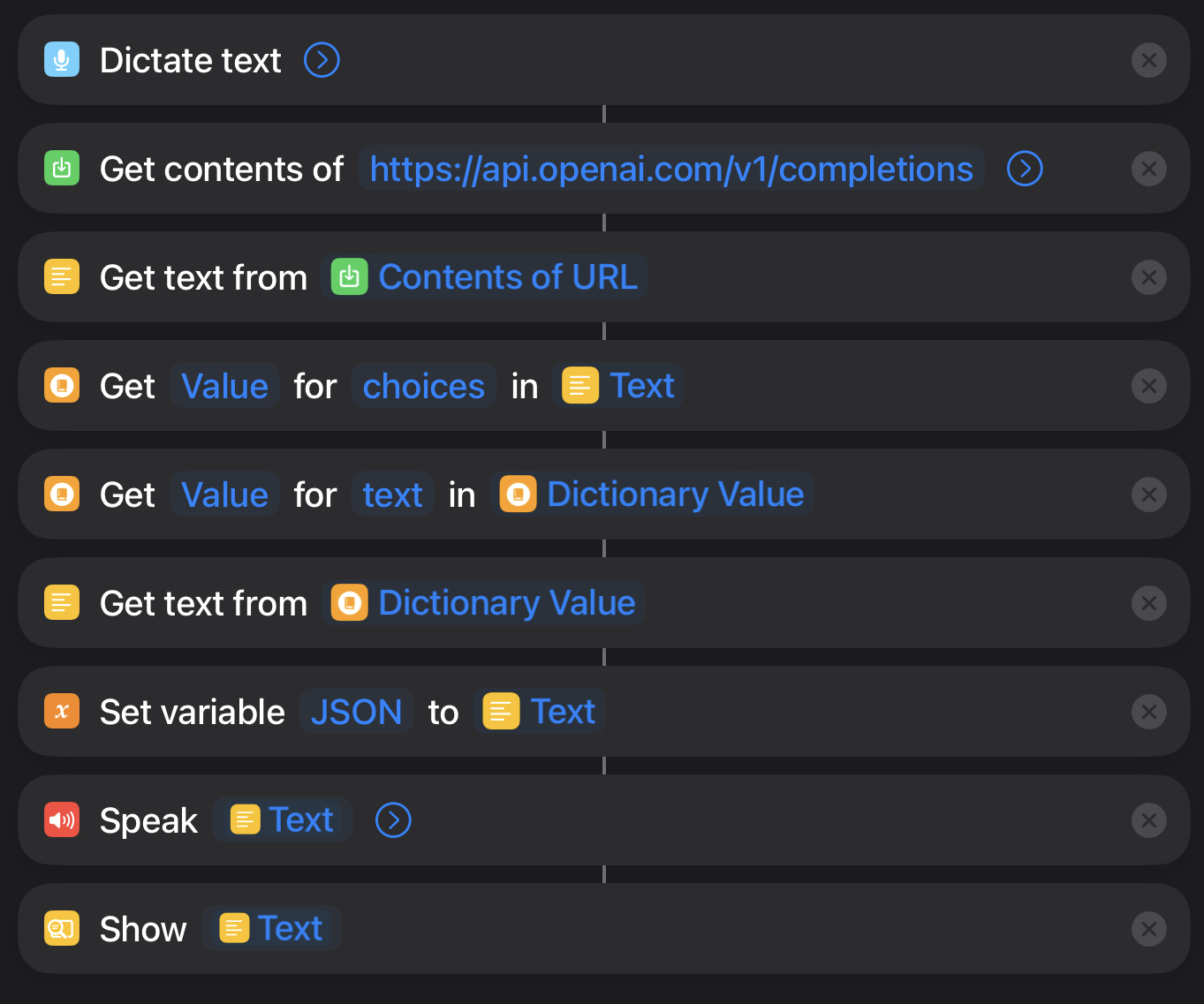
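The extraction step described above (pulling the text out of the choices array) can be sketched in Python like this. The sample is the response shown in this post; the function name `extract_completion_text` and the whitespace stripping are my own additions, since the completion starts with two newlines that you probably don't want spoken.

```python
import json

# Raw string so that the \n escapes stay as JSON escapes
# instead of becoming literal newlines inside the JSON string.
SAMPLE_RESPONSE = r"""
{
  "id": "cmpl-6bSFgj3DGgqMri31EJjE3xOy4fiko",
  "object": "text_completion",
  "created": 1674384756,
  "model": "text-davinci-003",
  "choices": [{
    "text": "\n\nI'm doing great, thanks for asking!",
    "index": 0,
    "logprobs": null,
    "finish_reason": "stop"
  }],
  "usage": {"total_tokens": 19, "completion_tokens": 11, "prompt_tokens": 8}
}
"""


def extract_completion_text(response_json):
    """Return the text of the first choice, without surrounding whitespace."""
    response = json.loads(response_json)
    choices = response.get("choices", [])
    if not choices:
        raise ValueError("response contains no choices")
    return choices[0]["text"].strip()


if __name__ == "__main__":
    print(extract_completion_text(SAMPLE_RESPONSE))
    # prints: I'm doing great, thanks for asking!
```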

Voice Shortcut

The concept is essentially the same as the textual version, but it uses the Dictate Text action to convert your voice input to text.

Shortcut download

Grab the desired shortcut from the links below, and run it from the Shortcuts app.

You can add them to your Home Screen for quick access too.

Improvements

As mentioned above, the current version of the Shortcut doesn’t keep conversational context, so it’s not really a ChatGPT-like experience.

But I think it can be done if we use the Get Value action to read the previous prompt and response from a variable, and then use the Set Value action to update the variable with the new prompt and response.
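To make the idea a bit more concrete, here is a minimal Python sketch of one way to keep context: store the earlier prompt/response pairs and prepend them to each new prompt before sending it. This is not how the current Shortcut works. The `User:`/`Assistant:` labels, the function names and the `max_turns` cap are all assumptions made for this example.

```python
def build_prompt(history, new_prompt):
    """Join the earlier turns and the new prompt into one prompt string.

    history is a list of (prompt, response) pairs, oldest first.
    """
    lines = []
    for prompt, response in history:
        lines.append(f"User: {prompt}")
        lines.append(f"Assistant: {response}")
    lines.append(f"User: {new_prompt}")
    lines.append("Assistant:")  # left open for the model to complete
    return "\n".join(lines)


def record_turn(history, prompt, response, max_turns=5):
    """Store the latest turn and drop the oldest ones beyond max_turns."""
    history.append((prompt, response))
    while len(history) > max_turns:
        history.pop(0)


if __name__ == "__main__":
    history = []
    record_turn(history, "How are you?", "I'm doing great, thanks for asking!")
    print(build_prompt(history, "What can you help me with?"))
```

Capping the number of stored turns keeps the prompt from growing without bound, which matters because every token in the prompt counts toward usage.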

Chris
