µcompress (ucompress on npm) is a lovely utility by WebReflection that compresses common static files.

A micro, all-in-one, compressor for common Web files

I've been using it on this very website since commit ce0a9e, as part of my GitHub Actions workflow.

10MB overall save

Here are some stats on the dramatic effect it had on the static assets:

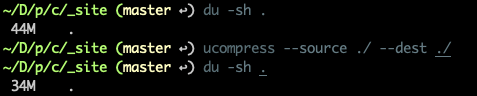

```
~/D/p/c/_site (master ↩) du -sh .
44M .
~/D/p/c/_site (master ↩) ucompress --source ./ --dest ./
~/D/p/c/_site (master ↩) du -sh .
34M .
```
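To repeat a before/after measurement like this in a script (for example on CI), the compression step can be wrapped in a small Bash helper. This is a minimal sketch: `size_kb` and `report_savings` are hypothetical helper names, not part of ucompress, and the helper assumes the command rewrites files in place, as `ucompress --source ./ --dest ./` does above.

```shell
#!/usr/bin/env bash
# Measure how much a compression command shrinks a directory.
# size_kb and report_savings are hypothetical helpers, not part of ucompress.
# Assumes the command rewrites files in place inside the same directory.
set -euo pipefail

# Disk usage of a directory in kilobytes (du -sk prints "<size>\t<path>").
size_kb() {
  du -sk "$1" | cut -f1
}

# Usage: report_savings <dir> <command> [args...]
report_savings() {
  local dir="$1" before after
  shift
  before=$(size_kb "$dir")
  "$@"                      # run the compression command
  after=$(size_kb "$dir")
  printf 'before: %sKB after: %sKB saved: %sKB\n' "$before" "$after" "$((before - after))"
}
```

For example: `report_savings ./_site npx ucompress --source ./_site --dest ./_site`. Like `du -sh`, it reports disk usage rather than the sum of file sizes, so small files are rounded up to whole blocks.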

It compresses the following assets (taken from the README):

- css files via csso

- gif files via gifsicle

- html files via html-minifier

- jpg or jpeg files via jpegtran-bin

- js files via uglify-es

- png files via pngquant-bin

- svg files via svgo

- xml files via html-minifier

As you can see, I saved ~10MB (44M down to 34M) in my _site folder, which is built with devblog. That’s huge.
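To see how many files in a build fall under each of the types listed above, a quick count by extension helps. This is a minimal sketch: `count_compressible` is a hypothetical helper, and matching purely on file extension is an assumption about how those types map to files.

```shell
#!/usr/bin/env bash
# Count files per extension that the ucompress README lists as supported.
# count_compressible is a hypothetical helper, not part of ucompress.
set -euo pipefail

count_compressible() {
  local dir="$1" ext n
  for ext in css gif html jpg jpeg js png svg xml; do
    # -iname matches case-insensitively, so photo.JPG counts as jpg
    n=$(find "$dir" -type f -iname "*.${ext}" | wc -l | tr -d ' ')
    printf '%s %s\n' "$ext" "$n"
  done
}
```

Running `count_compressible ./_site` prints one `<extension> <count>` line per type. Note that `jpg` and `jpeg` are counted separately.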

PageSpeed says thanks

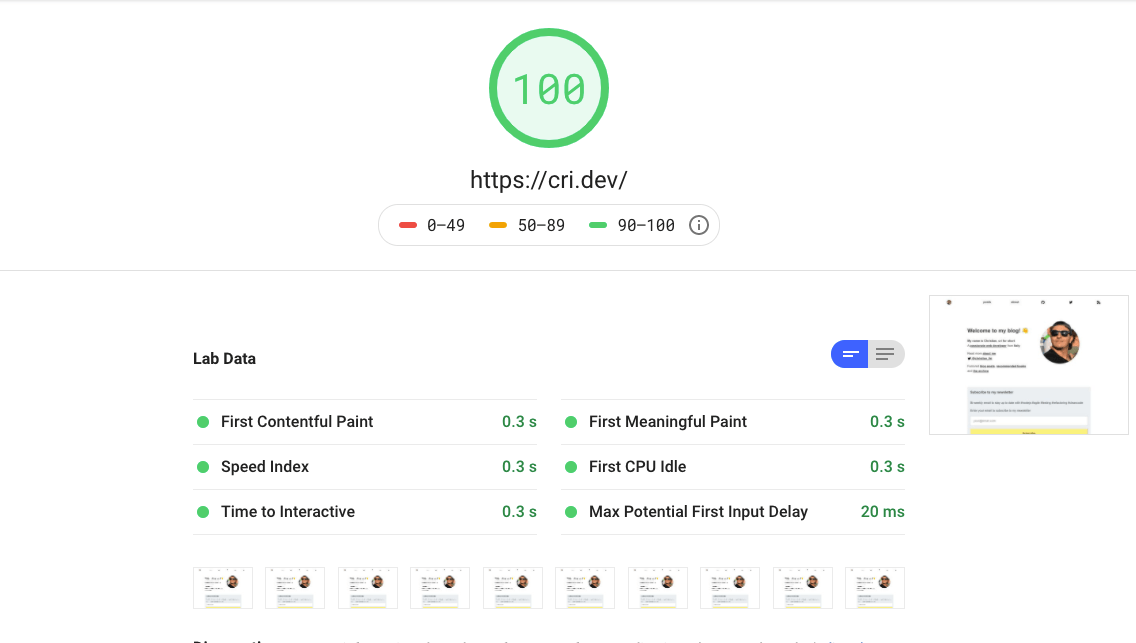

Previously, I optimized images by hand with convert and mogrify (from the ImageMagick suite), and Cloudflare compressed and optimized the assets.

That was it.

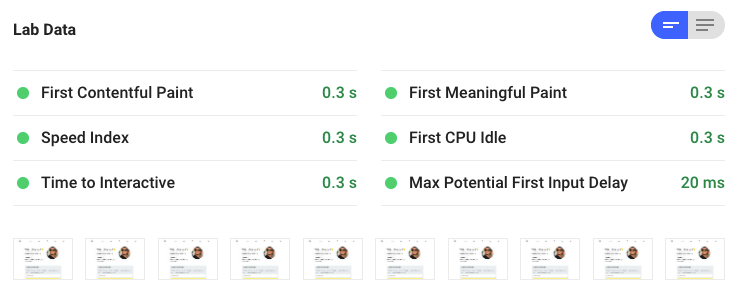

And I already had a pretty decent score on pagespeed insights:

Optimized assets

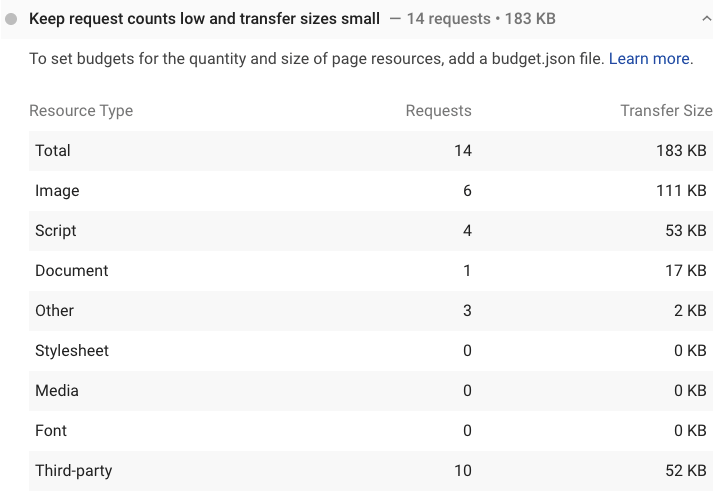

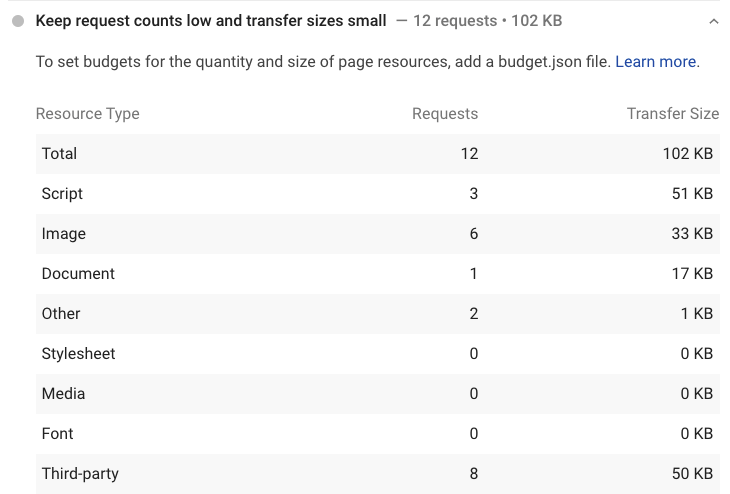

This were the assets loaded for the homepage of cri.dev:

And after using ucompress:

I have 400+ compiled assets (built with devblog) and ~150 images on this blog:

```
~/D/p/c/_site (master ↩) find . -type f | wc -l
417
~/D/p/c/_site (master ↩) find . -type f | grep images | wc -l
147
```

Using ucompress saves me a bit of time when syncing the assets from the _site folder to my S3 bucket, and the overhead of running it on CI is negligible.

Use it!

There's no excuse not to optimize your static files now.

```
npx ucompress --help

# or install globally
npm i -g ucompress
```

I use it this way in my GitHub Actions workflow:

- first, I build the blog with devblog

- then optimize the assets with `npx ucompress --source ./_site --dest ./_site` (see the main.yml workflow file)

- run UAT with Cypress

- sync the S3 bucket

- optionally clear the Cloudflare cache

- send myself a Telegram message once done
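The steps above could be sketched as a single Bash script. This is a hypothetical reconstruction, not the contents of main.yml: the `npx devblog` build command, the environment variable names (`BUCKET`, `CF_ZONE_ID`, `CF_API_TOKEN`, `TG_BOT_TOKEN`, `TG_CHAT_ID`) and the message text are all assumptions. The cache purge calls Cloudflare's `purge_cache` zone endpoint and the notification uses the Telegram Bot API `sendMessage` method. With `DRY_RUN=1` it only prints each command.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of the deploy steps, not the real main.yml.
# BUCKET, CF_ZONE_ID, CF_API_TOKEN, TG_BOT_TOKEN and TG_CHAT_ID are
# placeholder environment variables chosen for this example.
set -euo pipefail

# Execute a command, or just print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '+ %s\n' "$*"
  else
    "$@"
  fi
}

deploy() {
  run npx devblog                      # assumption: builds the blog into ./_site
  run npx ucompress --source ./_site --dest ./_site
  run npx cypress run                  # UAT
  run aws s3 sync ./_site "s3://${BUCKET}"
  if [ -n "${CF_ZONE_ID:-}" ]; then    # optional Cloudflare cache purge
    run curl -sS -X POST \
      "https://api.cloudflare.com/client/v4/zones/${CF_ZONE_ID}/purge_cache" \
      -H "Authorization: Bearer ${CF_API_TOKEN}" \
      -H "Content-Type: application/json" \
      --data '{"purge_everything":true}'
  fi
  run curl -sS "https://api.telegram.org/bot${TG_BOT_TOKEN}/sendMessage" \
    -d "chat_id=${TG_CHAT_ID}" -d "text=deploy done"
}
```

In GitHub Actions each step would more likely be its own job step, so failures show up individually in the run log; a single script is just easier to try locally with `DRY_RUN=1`.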

Thank you @WebReflection for ucompress ✌️

Chris